In a recent interview, linguist Noam Chomsky repeated his claim that there must be some innate feature of human beings that makes it possible for them to acquire natural languages:

“No one holds that the rules of language are innate. Rather, the faculty of language has a crucial genetic component. If that were not true, it would be a miracle that children acquire a language. That is obvious from the first moment of birth, when the child begins to pick out linguistically relevant information from the noisy environment, then following a predictable course of acquisition which, demonstrably, goes far beyond the evidence available, from the simplest words on to complex constructions and their interpretations. An ape with essentially the same auditory system, placed in the same environment, would detect nothing but noise. Either this is magic, or there is an innate component to the language faculty, as in the case of all other aspects of growth and development.”

Chomsky is not always clear about what he thinks is innate. Does he think that humans have innate knowledge of something, or merely an innate ability? If the latter, how could anyone disagree? It seems obvious that human beings have an innate ability to acquire natural languages and that this ability is unique to the human species. If the former, then it is unclear why Chomsky denies that the rules of grammar are innate. Sometimes Chomsky sounds as though he thinks human beings have propositional knowledge of the rules of grammar. For example, in Knowledge of Language, he writes, “it seems reasonably clear…how unconscious knowledge issues in conscious knowledge” (p. 270). Michael Devitt suggests that Chomsky is guilty of conflating innate ability with innate knowledge of propositions (Devitt, Ignorance of Language, 246).

Fiona Cowie argues that the crucial component of Chomsky’s theory is the claim that human beings have a domain-specific learning faculty that enables language acquisition to take place. In contrast, empiricists deny that human learning faculties are domain-specific. What we must have, empiricists suggest, is a general ability to learn. We use the same faculty to acquire any kind of knowledge (Fiona Cowie, What’s Within?).

What Chomsky means by ‘crucial genetic component’ is a feature of human brains that is genetically inherited and is the consequence of an evolutionary leap. Chomsky arrives at this conclusion while trying to explain the fact that human language learners (children) pick up languages without sufficient experience. He assumes that there must be some other cause that produces a linguistically capable human being. He argues both that the available experiences are too few (not enough information is given to a language learner) and that they are too many (there are so many non-linguistic inputs available).

The latter problem was raised by Karl Popper. Popper argues that any causal series has a beginning (the entity causing the representation) and an end (the mental state representing the entity). The problem Popper highlights is that, given the mass of possible inputs from a particular environment, nothing in the causal series itself can determine which particular bit of the environment triggers the series. If so, then one needs to appeal to some factor apart from the causal series to determine which bit of the environment is the beginning of the chain (and, mutatis mutandis, the end of the series). According to Popper, a set of mind-dependent factors, such as purpose and interest, determines what, out of an incredibly large and complex array of environmental inputs, ought to be the thing to think about (Karl Popper, Conjectures and Refutations: The Growth of Scientific Knowledge, 395–402).

The former problem is commonly called the ‘poverty of stimulus argument’. The argument suggests that children ‘sample’ a finite set of sentences and from that set are able to construct novel sentences. The finite set might include three well-formed sentences, from which the learner infers that a fourth sentence is also well formed. However, if the fourth sentence is not well formed, there is no negative evidence to show that it is not admissible.

Cowie suggests (i) “it is likely that John will leave,” (ii) “John is likely to leave,” and (iii) “it is possible that John will leave” as the three candidate sentences. Sentence (iv) is “John is possible to leave.” If no evidence is given to the learner that rules out (iv), the learner is justified in continuing to use the sentence. But no one does. In fact, we universally rule it out. The task posed by the logical problem of language acquisition is to explain why we do so. Chomsky posits that we have some innate ability to recognize (iv) as incorrect.

Taken together, these arguments imply at least that there is more to language acquisition than a general ability to learn. If our ability to learn language is only part of a general ability, then what accounts (a) for the laser-like focus of a learner on language inputs and (b) for the ability to rule out deviant sentences despite lacking apparent criteria for doing so? According to Chomsky, only an innately possessed domain-specific faculty can explain language acquisition in human beings.

It is difficult to find fault with Chomsky’s observation that language acquisition is miracle-like. In fact, I have always thought that the fact that we humans can learn anything at all is miraculous! Even the chief opponents of Chomsky’s theory grant that if there is no innate, domain-specific language module complete with its own universal grammar, then language acquisition is a mystery – we simply do not know what makes human learning possible. Perhaps, say empiricists, at some point in the future, we will discover something about our brains that will explain the miracle.

However, there are a few difficulties with Chomsky’s theory. First, if we have a domain-specific language-learning faculty, then what explains our ability to learn other things? By parity of reasoning, learning to drive, cook, construct buildings, draw, sing, and all the other things we know how to do would each require its own domain-specific learning faculty. But this seems implausible. As Putnam argues:

“If Chomsky admits that a domain can be as wide as empirical science…, then he has granted that something exists that may fittingly be called ‘general intelligence’… On the other hand, if domains become so small that each domain can use only learning strategies that are highly specific in purpose…, then it becomes a miracle that these skills… were not used at all until after the evolution of the race was complete” (Hilary Putnam, in Language and Learning, ed. Massimo Piattelli-Palmarini, 296).

One might reply that domains evolve in the same way organs evolve. But then one would have to postulate that for every major intellectual discovery (nuclear physics, mathematics) there is a corresponding evolutionary leap producing a domain-specific learning module. As Putnam points out, the number of organs is limited, but the number of domains is ‘virtually unlimited.’ Isn’t it more likely, Putnam suggests, that we have a general faculty of learning that is applied to novel domains of learning?

A further problem is that if the criteria for the correctness of a grammar are determined by the module we have been given through evolution, then it is difficult to account for the objective nature of that correctness. We tend to think of correctness as a property of the thing that has it. In this case, it is sentences that are well or poorly formed. Analogously, in formal logic, we don’t think that the criteria for well-formed formulas are properties of our minds.

I have recently argued that our ability to distinguish good from bad sentences is analogous to a moral conscience. The power in question does not entail prior knowledge of a set of rules, but the power to recognize objective rules. If so, then the task at hand is to explain such a power. Surely having the power to recognize something implies prior knowledge of what to look for, even if that knowledge is only tacit. Furthermore, we are able to recognize all sorts of things given only limited information about them. On the surface, at least, it appears that we would have to know more about what we are recognizing than experience has provided. How can we explain this power?

At this point, the question requires some auxiliary theory to help explain the phenomenon. The impasse between the two sides, nativism and empiricism, provokes an appeal to a thesis about reality. What is it about reality that might help? Jerrold Katz, a former colleague of Chomsky’s, rebelled against the prevailing linguistic conceptualism at MIT. And there can be no more rebellious act at an academic institution known for its science than to embrace Platonism! Katz did so and produced several works arguing that language is an abstract object.

Theism is another auxiliary thesis about reality and it would go a long way to explain how human beings learn. It would also do so whilst remaining conceptualist about human language abilities.

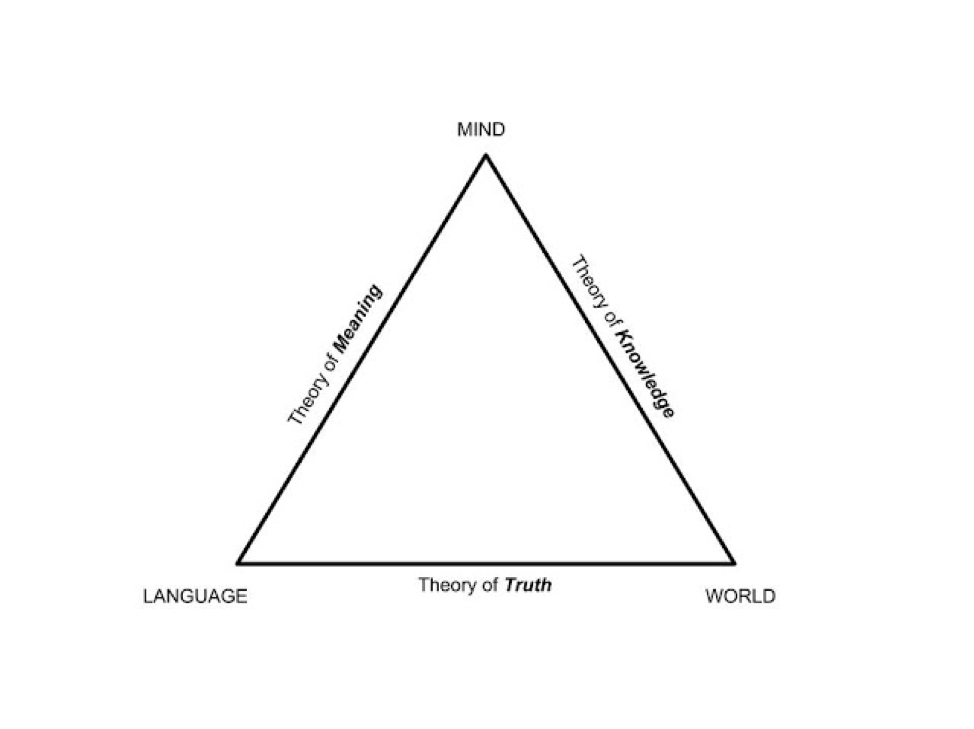

It seems to me that there are four problems to be solved:

1. The intention problem – Just how do we explain the human ability to pick out words and sentences from the plethora of sensory input?

2. The criteria problem – How do we explain the human ability to recognize good and bad sentences without negative evidence for some of the bad ones?

3. The justification problem – What justifies our belief in a universal grammar?

4. The normativity problem – What is it about reality that would account for the objective normativity?

Problem (1) implies that human mental acts are designed. We are built in such a way as to be able to direct our minds to one thing rather than another. If God exists and created human beings, then human beings would be designed to pick out words from background noise.

Problem (2) requires an innate knowledge of some universal grammar, but why suggest that this knowledge is within a human mind? As some philosophers suggest, the mind’s ability to test sentences for grammaticality would require enormous prior knowledge. If so, then an infinite mind would know it.

Problem (3) is a problem facing all naturalistic nativist theories. Just because something is in the head doesn’t make it right. If necessary truths are innately known, they are justified only if there is some reason to think them true. But, if naturalism is true, one is wise to wonder how. Nativists like Descartes suggested that the answer is God. He had a point. Without a divine mind who cannot be wrong about anything, innate knowledge in human beings is merely innate belief.

Problem (4) arises when one reflects on the human ability to recognize good and bad sentences. Chomsky clearly accepts that humans are able to weed out offending strings of words. He thinks that our underlying universal grammar (UG) is normative. But what explains our ability to recognize normativity? Analogously, we recognize that actions are good or bad. Many philosophers, such as Richard Swinburne, have argued that such an awareness is best explained by the existence of God. If naturalism is true, however, it becomes much more difficult to explain normativity in the universe.